A quick intro to Kafka along with a demo

What is Kafka?

Kafka is an open-source distributed event streaming platform. It is written in Java and Scala and was originally developed at LinkedIn. Today, it is maintained as an Apache Software Foundation project, with Confluent (a company founded by Kafka’s original creators) among its major contributors. Kafka has seen wide adoption in the industry. In fact, the official homepage says that “More than 80% of all Fortune 100 companies” use Kafka! That’s a huge number, and it gets us thinking about what makes Kafka so special.

One of Kafka’s main highlights is that it is optimised for scalability, especially horizontal scaling. It also offers high throughput and high availability, which makes it well suited to data- and event-heavy applications and services.

I will aim to give a basic overview of Kafka in this article without adding any complex jargon. I will also show a demo of Kafka running locally on my computer (macOS).

For deeper reading, Stanislav Kozlovski has written a thorough article here. Do check it out!

The Problem

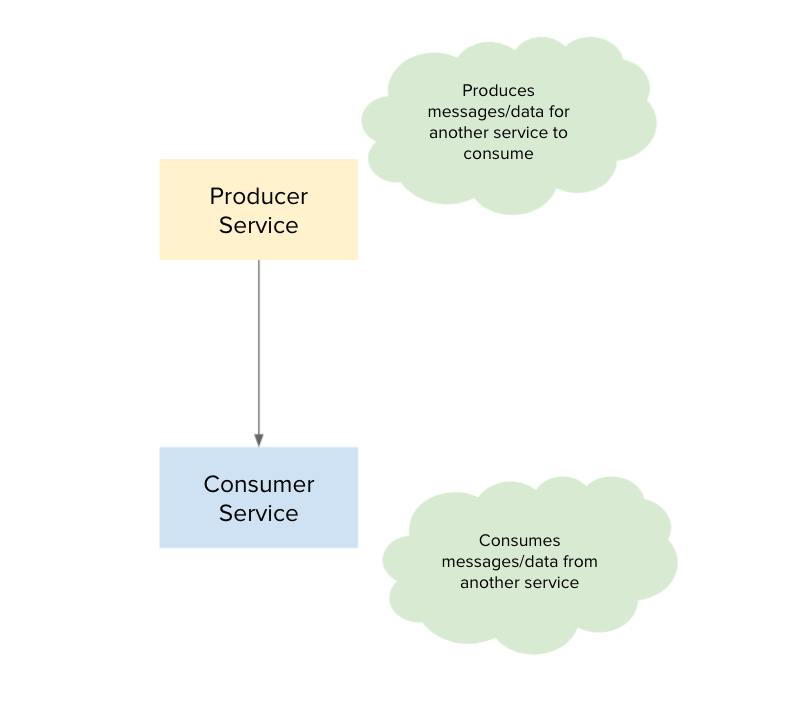

Let’s imagine that you are creating an application. You start off with a producer service which produces data or messages. You also have a corresponding consumer service, whose job is to wait for events from the producer and react to them.

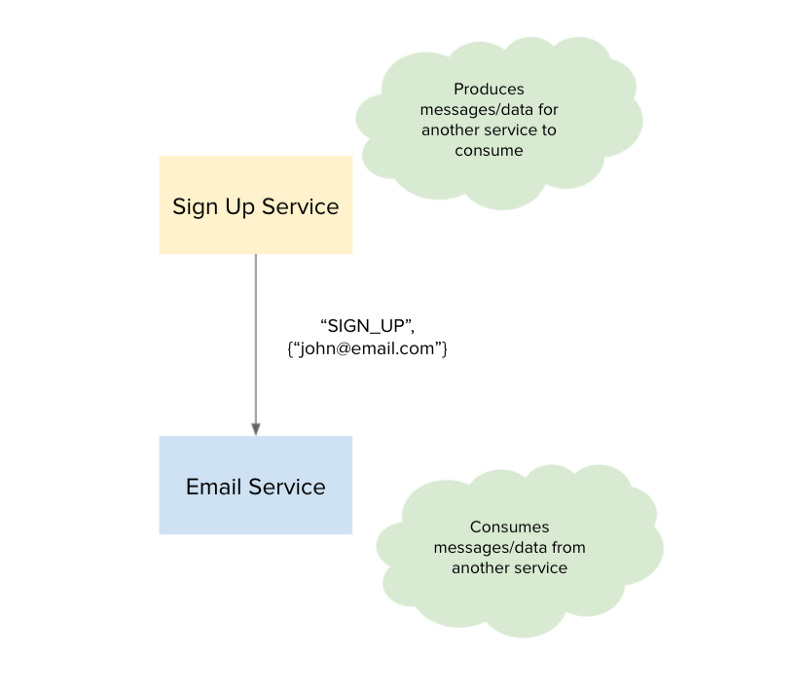

Let’s put this in a simple context. Applications typically offer sign-up capabilities, and following a sign-up, we send a welcome email to the new user. For this use case, we may have two services: a sign-up service and an email service, acting as the producer and the consumer respectively.

For example, when a user John signs up to our application with his email (e.g. “john@email.com”), the following actions happen:

1. Sign-up service registers and stores the new user in the database
2. Sign-up service fires an event “SIGN_UP”, with a payload containing the email of the user, to the email service
3. Email service captures this event and unpacks the payload
4. Email service sends a welcome email to John
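To make the flow above concrete, here is a minimal in-memory sketch in Python. The class and method names (SignUpService, EmailService, handle, sign_up) are my own inventions for illustration, the “database” is just a list, and no real email is sent. Notice that the producer has to hold a direct reference to the consumer.

```python
from dataclasses import dataclass, field


@dataclass
class EmailService:
    """Consumer: reacts to SIGN_UP events by recording a welcome email."""
    sent: list = field(default_factory=list)

    def handle(self, event_type: str, payload: dict) -> None:
        if event_type == "SIGN_UP":
            # Unpack the payload and record the email we would send.
            self.sent.append(f"Welcome email to {payload['email']}")


@dataclass
class SignUpService:
    """Producer: stores the user, then notifies the email service directly."""
    email_service: EmailService
    users: list = field(default_factory=list)  # stand-in for the database

    def sign_up(self, email: str) -> None:
        self.users.append(email)  # register and store the new user
        self.email_service.handle("SIGN_UP", {"email": email})  # fire the event


email_service = EmailService()
sign_up_service = SignUpService(email_service)
sign_up_service.sign_up("john@email.com")
print(email_service.sent)
```

The sign-up service only works if it knows exactly which consumer to call, which is fine for two services but becomes a burden as more are added.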

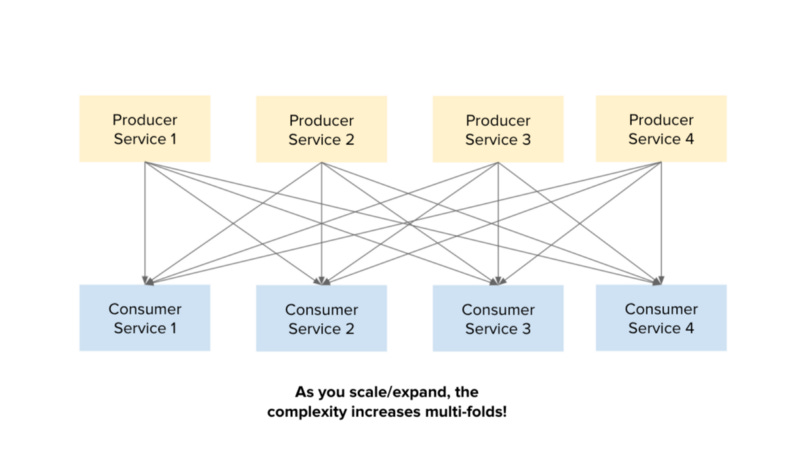

Now, that was pretty straightforward. However, what happens when we have more services? Let’s assume the application grows and we choose to adopt a microservice architecture. The number of producer and consumer services will likely increase as well.

Think about a scenario where each producer needs to communicate an event to every consumer. In this case, it will need to repeat itself for each consumer. As the number of producers and consumers increases, the connections between the services multiply: with P producers and C consumers, there can be up to P × C direct links to build and maintain.

So this brings us to the question:

How can we better enable the sharing of data/events?

The Solution

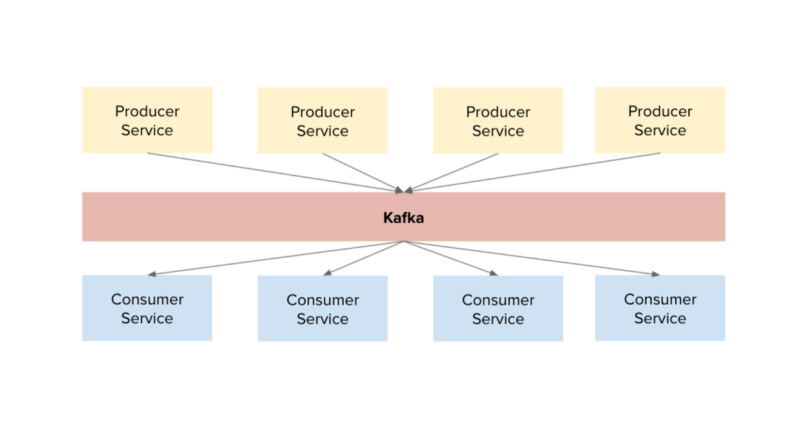

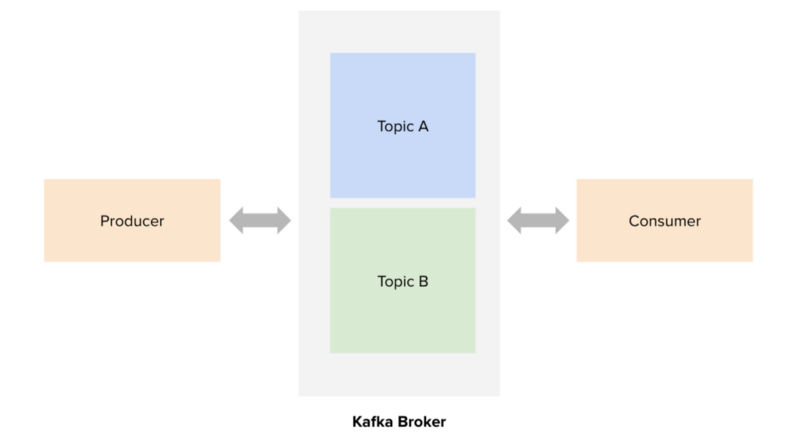

And this is where Kafka comes in. Kafka becomes the message broker for these services, and producers are now able to “stream” data and events to Kafka.

Our new architecture looks much cleaner. All the producer and consumer services communicate with the Kafka service rather than with each other. Essentially, if you are familiar with design patterns, we are implementing a mediator pattern by introducing an intermediary service.
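A rough way to see why this is cleaner is to count connections. The sketch below assumes the worst case described earlier, where every producer has to reach every consumer, and compares it with each service holding a single connection to the broker. The function names are hypothetical and the numbers are purely illustrative.

```python
def direct_links(producers: int, consumers: int) -> int:
    """Worst case without a broker: every producer talks to every consumer."""
    return producers * consumers


def brokered_links(producers: int, consumers: int) -> int:
    """With a broker: every service holds one connection to the broker."""
    return producers + consumers


for p, c in [(2, 2), (5, 5), (10, 20)]:
    print(f"{p} producers, {c} consumers: "
          f"direct={direct_links(p, c)}, via broker={brokered_links(p, c)}")
```

For small systems the difference is negligible, but the direct count grows multiplicatively while the brokered count grows additively.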

With a Kafka broker, producers can publish to a specific topic in the broker. Going back to the sign up example, the sequence of events will be:

1. Sign-up service (producer) publishes the event “SIGN_UP” to the topic “emails” with a payload containing the new email (e.g. john@email.com)
2. Email service (consumer) is subscribed to the topic “emails”
3. Email service notices that there is a new event in the topic “emails”
4. Email service consumes this new event and unpacks the payload
5. Email service sends the welcome email

Similarly, if there are more consumers which need to listen to the same topic “emails”, they can do that through the Kafka broker.
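The publish/subscribe idea can be sketched with a toy in-memory broker. This is not how Kafka is implemented and it does not use any Kafka client library; InMemoryBroker, Consumer and the “analytics-service” consumer are names I made up for illustration. The point it shows is that a topic behaves like an append-only list and each consumer keeps its own read position, so adding a second consumer does not require any change to the producer. (In real Kafka, this independence applies between consumer groups.)

```python
from collections import defaultdict


class InMemoryBroker:
    """Toy stand-in for a broker: each topic is an append-only list of events."""

    def __init__(self) -> None:
        self.topics = defaultdict(list)

    def publish(self, topic: str, event: dict) -> None:
        self.topics[topic].append(event)

    def read(self, topic: str, offset: int) -> list:
        """Return every event in the topic from the given position onwards."""
        return self.topics[topic][offset:]


class Consumer:
    """Tracks its own read position, so consumers do not affect one another."""

    def __init__(self, name: str, broker: InMemoryBroker, topic: str) -> None:
        self.name, self.broker, self.topic = name, broker, topic
        self.offset = 0
        self.received = []

    def poll(self) -> list:
        new_events = self.broker.read(self.topic, self.offset)
        self.offset += len(new_events)  # remember how far we have read
        self.received.extend(new_events)
        return new_events


broker = InMemoryBroker()
email_service = Consumer("email-service", broker, "emails")
analytics_service = Consumer("analytics-service", broker, "emails")  # hypothetical

# The producer publishes once; it does not know who is listening.
broker.publish("emails", {"type": "SIGN_UP", "email": "john@email.com"})

print(email_service.poll())      # the email service sees the event
print(analytics_service.poll())  # so does the second consumer
print(email_service.poll())      # nothing new since the last poll
```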

Demo on macOS

Setup with Homebrew

Firstly, we will install all the required libraries with Homebrew, which is a package manager for macOS. Visit brew.sh to install Homebrew if you do not already have it installed.

Next, execute the following commands to install Kafka and ZooKeeper.

brew install kafka

brew install zookeeper

Kafka uses ZooKeeper to manage service discovery for the Kafka brokers that form the cluster. ZooKeeper sends changes in the topology to Kafka, so each node in the cluster knows when a new broker has joined, a broker has died, a topic has been removed, a topic has been added, and so on. (This applies to ZooKeeper-based Kafka releases; newer releases can run without ZooKeeper in KRaft mode, and Kafka 4.0 drops ZooKeeper support altogether.)

Start the Server

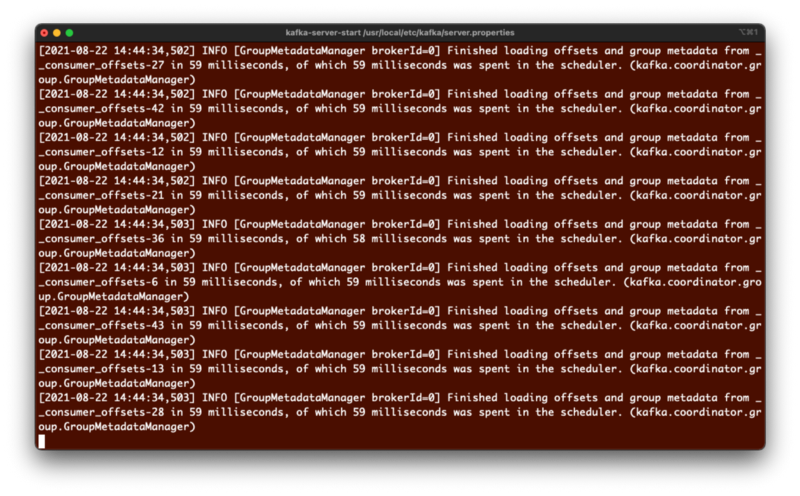

Next, let’s start the server with these 2 commands:

zkServer start

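kafka-server-start /usr/local/etc/kafka/server.properties

If Homebrew is installed under /opt/homebrew (the default on Apple Silicon Macs), the file is at /opt/homebrew/etc/kafka/server.properties instead.

You should see output like this: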

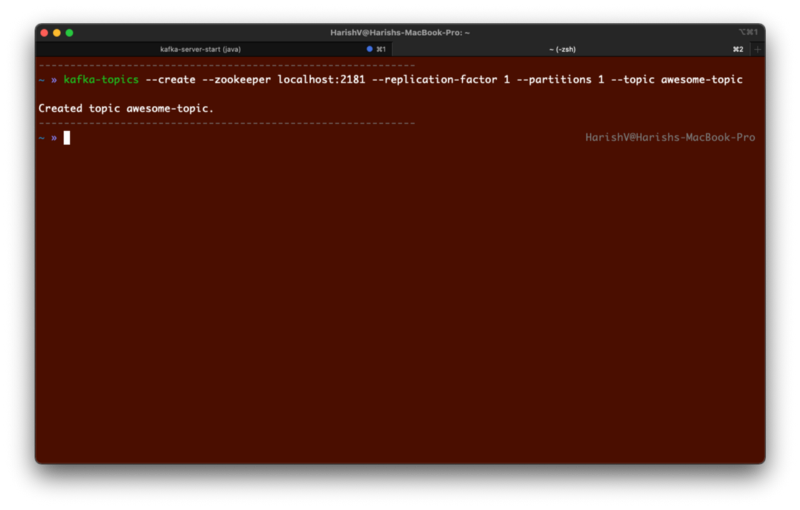

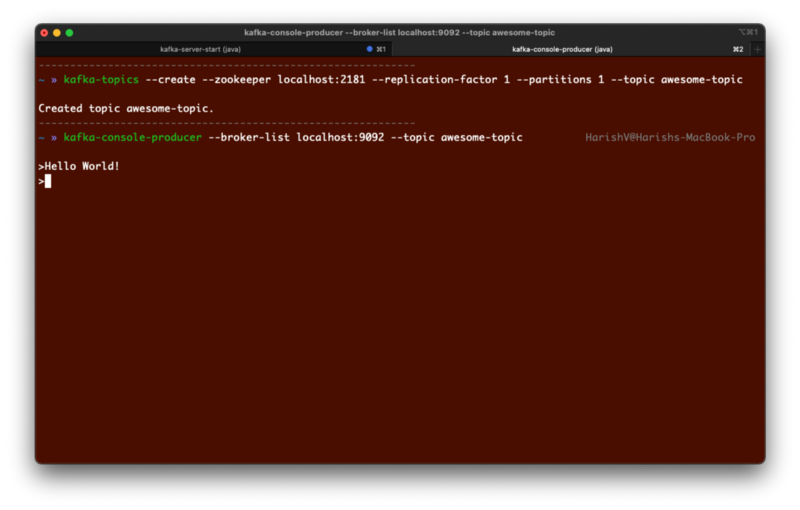

Create a Topic

Now, we will create a topic for us to publish and receive events. Let’s call this topic “awesome-topic”.

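The Kafka server from the previous step is still running in the first terminal. So, let’s open a new terminal to create a topic:

kafka-topics --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic awesome-topic

The --zookeeper flag only exists in older Kafka releases; it was removed in Kafka 3.0, where you pass --bootstrap-server localhost:9092 instead.

Create a Producer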

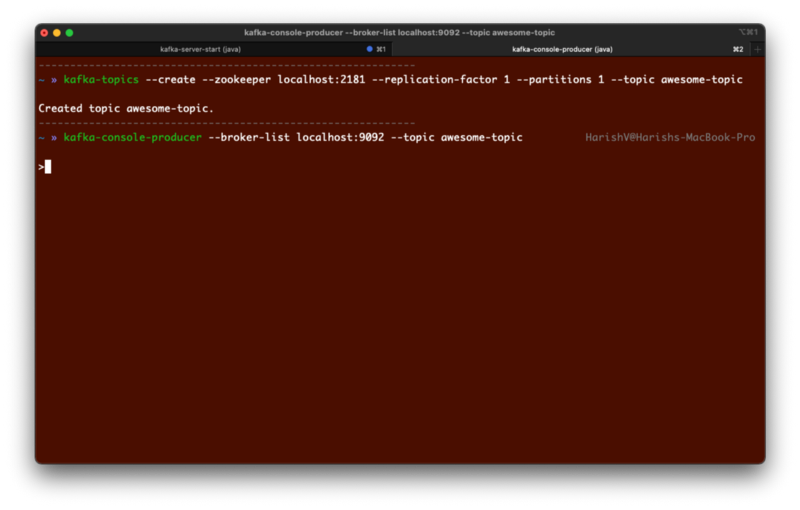

Now, we will create a producer service which publishes to the topic “awesome-topic” with this command:

kafka-console-producer --broker-list localhost:9092 --topic awesome-topic

The producer will wait for your input. Type a simple message like “Hello World!” and press Enter to send it.

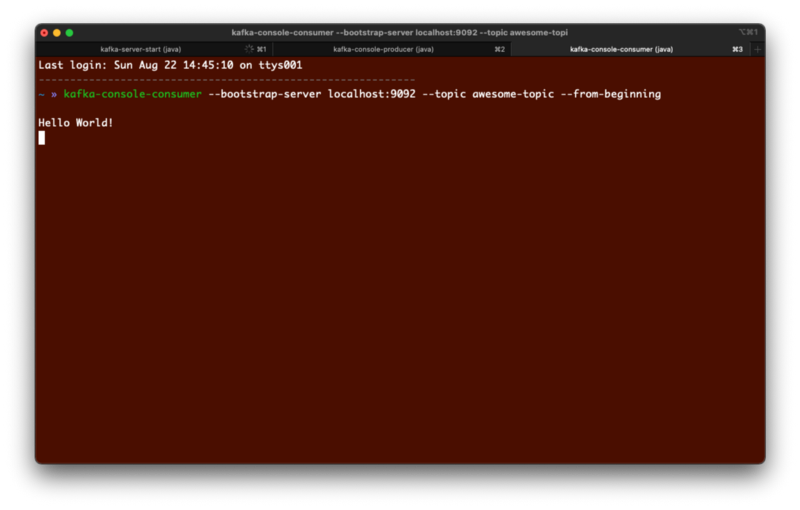

Create a Consumer

Now, we will create a consumer service listening to the topic “awesome-topic”.

kafka-console-consumer --bootstrap-server localhost:9092 --topic awesome-topic --from-beginning

When you execute the command, you will immediately see the message you sent from your producer above, because --from-beginning makes the consumer read the topic from its first message. This means that the consumer has been created successfully and has consumed the data we sent to our topic, awesome-topic.

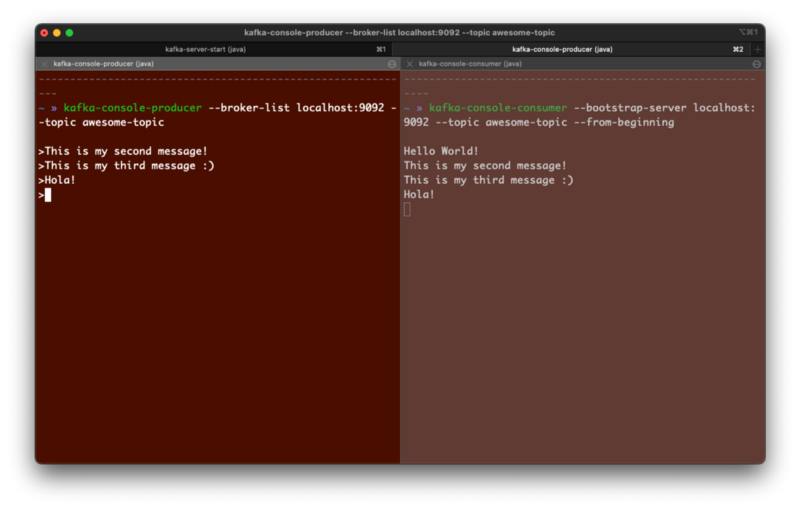
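If you are wondering what --from-beginning changes, here is a tiny sketch of the idea, using a plain Python list as a stand-in for the single partition of awesome-topic. The function names are hypothetical and this is only a model of the behaviour: a consumer that starts from the beginning reads from position 0, while one that does not starts at the current end of the log and only sees messages produced afterwards.

```python
log = []  # toy stand-in for the single partition of awesome-topic


def produce(message: str) -> None:
    log.append(message)


def start_offset(from_beginning: bool) -> int:
    """Position 0 replays everything; otherwise start at the current end."""
    return 0 if from_beginning else len(log)


produce("Hello World!")  # sent before any consumer was started

replaying = start_offset(from_beginning=True)
latest_only = start_offset(from_beginning=False)

produce("Second message")  # sent after both consumers started

print(log[replaying:])    # includes the earlier "Hello World!"
print(log[latest_only:])  # only what arrived after the consumer started
```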

Sending and Receiving Data

Putting these 2 windows side by side, you can send messages from the producer to the Kafka broker for awesome-topic.

At the same time, you will see your consumer receive each message from the Kafka broker, as it is subscribed to awesome-topic.

Conclusion

Kafka can be an incredibly powerful solution for your applications. Acting as a mediator or a message broker, Kafka can enable applications to scale effectively. For example, a single cluster can span hundreds of brokers. Kafka also allows for high throughput of data (in the range of millions of messages per second). And the low latency it offers enables us to create real-time applications. These are some of the reasons for its high adoption rate, especially by big names such as LinkedIn, Netflix and Uber.

As next steps, you might be interested in digging deeper into how Kafka works. Timothy Stepro has written about and illustrated Kafka beautifully in this article. And if you’re unsure about the difference between Kafka and other message queue services like RabbitMQ, do check out this article by Eran Stiller for a head-to-head comparison between the two!

Happy hacking! 💻